The tradeoffs between building bespoke code-based agents and the major agent frameworks.

Thanks to John Gilhuly for his contributions to this piece.

Agents are having a moment. With multiple new frameworks and fresh investment in the space, modern AI agents are overcoming shaky origins to rapidly supplant RAG as an implementation priority. So will 2024 finally be the year that autonomous AI systems take over writing our emails, booking flights, talking to our data, or seemingly any other task?

Maybe, but much work remains to get to that point. Any developer building an agent must not only choose foundations (which model, use case, and architecture to use) but also which framework to leverage. Do you go with the long-standing LangGraph, or the newer entrant LlamaIndex Workflows? Or do you go the traditional route and code the whole thing yourself?

This post aims to make that choice a bit easier. Over the past few weeks, I built the same agent in major frameworks to examine some of the strengths and weaknesses of each at a technical level. All of the code for each agent is available in this repo.

Background on the Agent Used for Testing

The agent used for testing includes function calling, multiple tools or skills, connections to outside resources, and shared state or memory.

The agent has the following capabilities:

- Answering questions from a knowledge base

- Talking to data: answering questions about telemetry data of an LLM application

- Analyzing data: analyzing higher-level trends and patterns in retrieved telemetry data

In order to accomplish these, the agent has three starting skills: RAG with product documentation, SQL generation on a trace database, and data analysis. A simple gradio-powered interface is used for the agent UI, with the agent itself structured as a chatbot.

Code-Based Agent (No Framework)

The first option you have when developing an agent is to skip the frameworks entirely and build the agent fully yourself. When embarking on this project, this was the approach I started with.

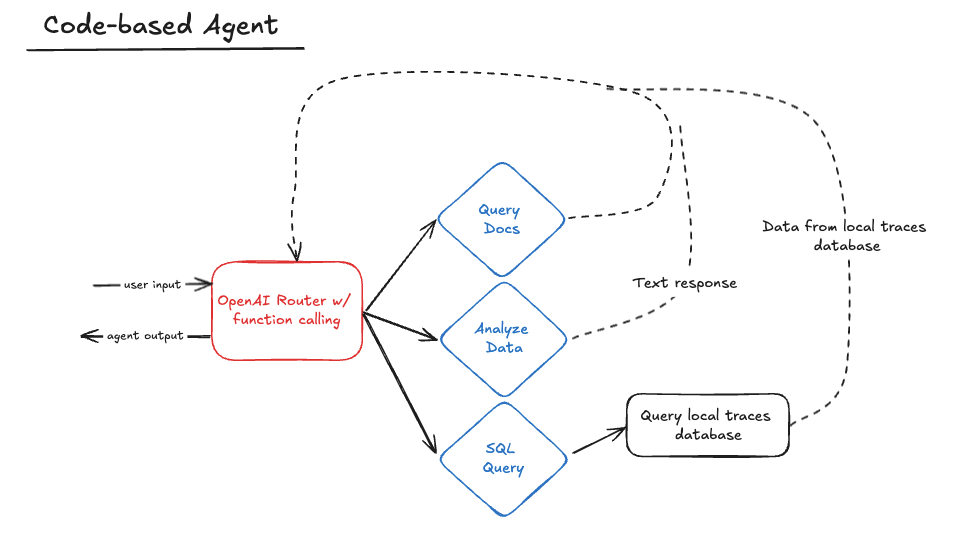

Pure Code Architecture

The code-based agent below is made up of an OpenAI-powered router that uses function calling to select the right skill to use. After that skill completes, it returns to the router to either call another skill or respond to the user.

The agent keeps an ongoing list of messages and responses that is passed fully into the router on each call to preserve context through cycles.

    def router(messages):
        # Add the system prompt once, if the history does not already contain one.
        if not any(
            isinstance(message, dict) and message.get("role") == "system" for message in messages
        ):
            system_prompt = {"role": "system", "content": SYSTEM_PROMPT}
            messages.append(system_prompt)

        # Ask the model what to do next, offering every skill as a tool.
        response = client.chat.completions.create(
            model="gpt-4o",
            messages=messages,
            tools=skill_map.get_combined_function_description_for_openai(),
        )

        messages.append(response.choices[0].message)
        tool_calls = response.choices[0].message.tool_calls
        if tool_calls:
            # Run the requested skills, then loop back into the router.
            handle_tool_calls(tool_calls, messages)
            return router(messages)
        else:
            return response.choices[0].message.content
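The router above calls handle_tool_calls without showing it. The sketch below is one plausible shape for that helper, not the code from the repo: it assumes tool calls shaped like the OpenAI response field (an id plus a function name and JSON-encoded arguments), and it takes a hypothetical `get_callable` lookup as a third argument so that it can run on its own.

```python
import json
from types import SimpleNamespace


def handle_tool_calls(tool_calls, messages, get_callable):
    """Run each requested tool and append its result to the message history.

    `get_callable` is a hypothetical lookup (name -> function); in the agent
    above, the SkillMap would play this role.
    """
    for tool_call in tool_calls:
        name = tool_call.function.name
        # OpenAI returns tool arguments as a JSON-encoded string.
        arguments = json.loads(tool_call.function.arguments or "{}")
        try:
            func = get_callable(name)
        except KeyError:
            result = f"Error: unknown function {name}"
        else:
            result = func(**arguments)
        # The tool message echoes the id of the call it answers.
        messages.append(
            {"role": "tool", "tool_call_id": tool_call.id, "content": str(result)}
        )
    return messages


# Stand-in for a tool call object, shaped like the OpenAI response field.
fake_call = SimpleNamespace(
    id="call_1",
    function=SimpleNamespace(name="add", arguments='{"a": 2, "b": 3}'),
)
skills = {"add": lambda a, b: a + b}
history = handle_tool_calls([fake_call], [], skills.__getitem__)
print(history[0]["content"])  # 5
```

Appending the result with the matching tool_call_id is what lets the next router call see which request each result answers.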

The skills themselves are defined in their own classes (e.g. GenerateSQLQuery) that are collectively held in a SkillMap. The router itself only interacts with the SkillMap, which it uses to load skill names, descriptions, and callable functions. This approach means that adding a new skill to the agent is as simple as writing that skill as its own class, then adding it to the list of skills in the SkillMap. The idea here is to make it easy to add new skills without disturbing the router code.

    from typing import Callable


    class SkillMap:
        def __init__(self):
            skills = [AnalyzeData(), GenerateSQLQuery()]

            # Map each skill name to (OpenAI function description, callable).
            self.skill_map = {}
            for skill in skills:
                self.skill_map[skill.get_function_name()] = (
                    skill.get_function_dict(),
                    skill.get_function_callable(),
                )

        def get_function_callable_by_name(self, skill_name) -> Callable:
            return self.skill_map[skill_name][1]

        def get_combined_function_description_for_openai(self):
            combined_dict = []
            for _, (function_dict, _) in self.skill_map.items():
                combined_dict.append(function_dict)
            return combined_dict

        def get_function_list(self):
            return list(self.skill_map.keys())

        def get_list_of_function_callables(self):
            return [skill[1] for skill in self.skill_map.values()]

        def get_function_description_by_name(self, skill_name):
            return str(self.skill_map[skill_name][0]["function"])
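The SkillMap relies on each skill exposing three methods: get_function_name, get_function_dict, and get_function_callable. The skill classes themselves are not shown here, so the following is only a sketch of that interface using a made-up EchoSkill; the description dictionary follows the general shape of an OpenAI function-calling tool definition.

```python
from typing import Callable


class EchoSkill:
    """Hypothetical skill used only to illustrate the interface."""

    def get_function_name(self) -> str:
        return "echo"

    def get_function_dict(self) -> dict:
        # Tool description in the shape used for OpenAI function calling.
        return {
            "type": "function",
            "function": {
                "name": self.get_function_name(),
                "description": "Repeat the prompt back to the user.",
                "parameters": {
                    "type": "object",
                    "properties": {"prompt": {"type": "string"}},
                    "required": ["prompt"],
                },
            },
        }

    def get_function_callable(self) -> Callable:
        # Returning the bound method means callers never pass `self`.
        return self.echo

    def echo(self, prompt: str) -> str:
        return prompt


skill = EchoSkill()
registry = {
    skill.get_function_name(): (skill.get_function_dict(), skill.get_function_callable())
}
print(registry["echo"][1](prompt="hello"))  # hello
```

With this shape, registering a new skill only touches the list of skill instances; the router never needs to know the skill exists.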

Overall, this approach is fairly straightforward to implement but comes with a few challenges.

Challenges with Pure Code Agents

The first difficulty lies in structuring the router system prompt. Often, the router in the example above insisted on generating SQL itself instead of delegating that to the right skill. If you’ve ever tried to get an LLM not to do something, you know how frustrating that experience can be; finding a working prompt took many rounds of debugging. Accounting for the different output formats from each step was also tricky. Since I opted not to use structured outputs, I had to be ready for multiple different formats from each of the LLM calls in my router and skills.
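To make the output-format problem concrete, here is a minimal sketch of the kind of tolerant parsing this implies for a SQL skill. The helper name extract_sql and the two reply formats are assumptions for illustration, not the handling used in the repo.

```python
import re

FENCE = "`" * 3  # built at runtime so this example contains no literal code fence


def extract_sql(llm_output: str) -> str:
    """Best-effort extraction of a SQL statement from an LLM reply."""
    # Case 1: the reply wraps the query in a fenced block, optionally tagged "sql".
    fenced = re.search(
        FENCE + r"(?:sql)?\s*(.*?)" + FENCE, llm_output, re.DOTALL | re.IGNORECASE
    )
    text = fenced.group(1) if fenced else llm_output
    # Case 2: the query is inline; keep everything from the first SELECT onward.
    match = re.search(r"\bSELECT\b.*", text, re.DOTALL | re.IGNORECASE)
    return (match.group(0) if match else text).strip().rstrip(";")


fenced_reply = "Here is the query:\n" + FENCE + "sql\nSELECT * FROM traces;\n" + FENCE
print(extract_sql(fenced_reply))                          # SELECT * FROM traces
print(extract_sql("Sure! select count(*) from traces;"))  # select count(*) from traces
```

A heuristic like this is fragile (prose after an inline query is kept, and the word "select" in a sentence would confuse it), which is part of why structured outputs are attractive.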

Benefits of a Pure Code Agent

A code-based approach provides a good baseline and starting point, offering a great way to learn how agents work without relying on canned agent tutorials from prevailing frameworks. Although convincing the LLM to behave can be challenging, the code structure itself is simple enough to use and might make sense for certain use cases (more in the analysis section below).

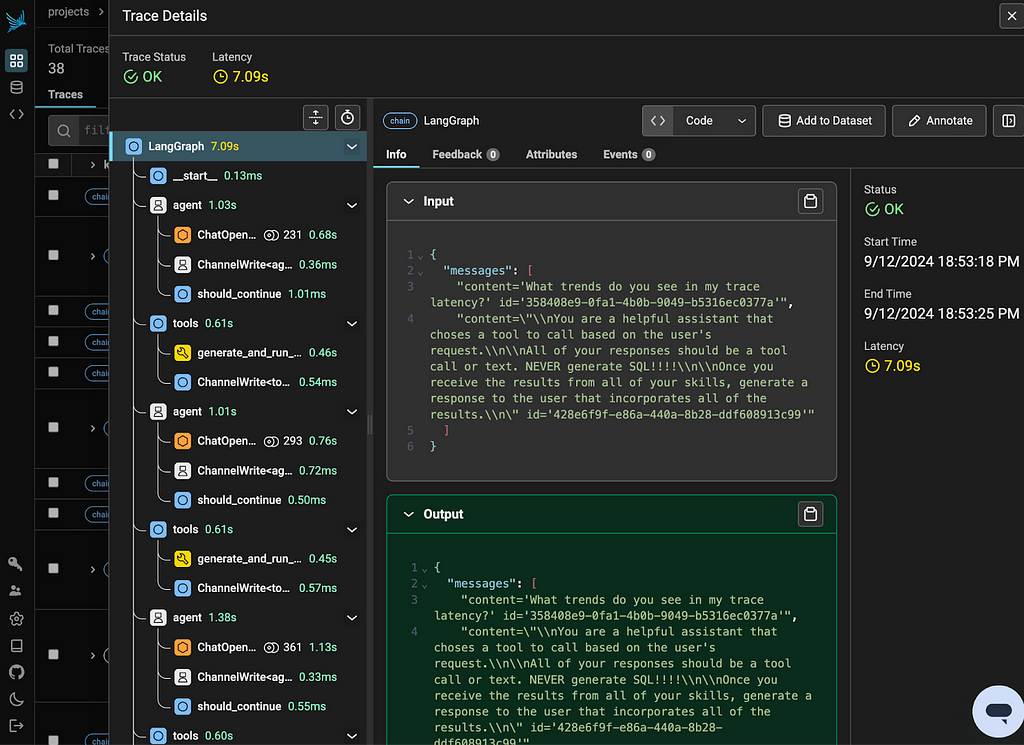

LangGraph

LangGraph is one of the longest-standing agent frameworks, first released in January 2024. The framework is built to address the acyclic nature of existing pipelines and chains by adopting a Pregel graph structure instead. LangGraph makes it easier to define loops in your agent by adding the concepts of nodes, edges, and conditional edges to traverse a graph. LangGraph is built on top of LangChain, and uses the objects and types from that framework.

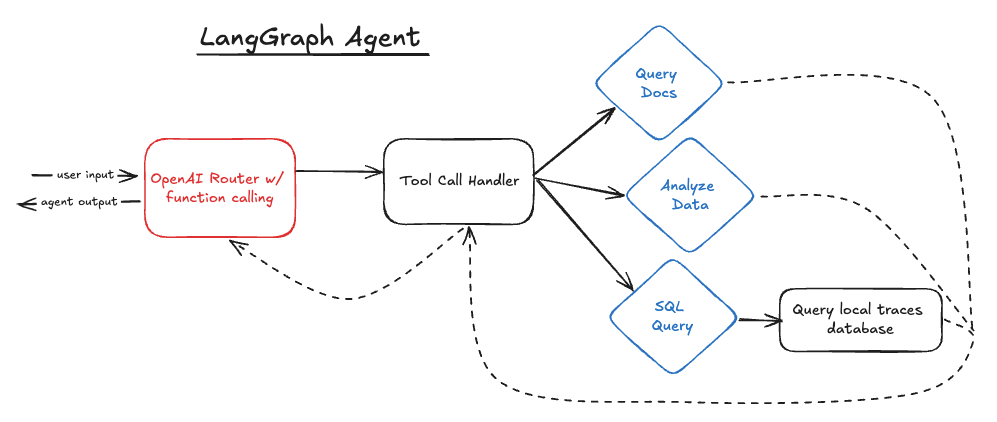

LangGraph Architecture

The LangGraph agent looks similar to the code-based agent on paper, but the code behind it is drastically different. LangGraph still uses a “router” technically, in that it calls OpenAI with functions and uses the response to continue to a new step. However, the way the program moves between skills is controlled completely differently.

    from langchain_openai import ChatOpenAI
    from langgraph.checkpoint.memory import MemorySaver
    from langgraph.graph import END, START, MessagesState, StateGraph
    from langgraph.prebuilt import ToolNode

    tools = [generate_and_run_sql_query, data_analyzer]
    model = ChatOpenAI(model="gpt-4o", temperature=0).bind_tools(tools)

    def create_agent_graph():
        workflow = StateGraph(MessagesState)

        # One node for the LLM call, one for executing tool calls.
        tool_node = ToolNode(tools)
        workflow.add_node("agent", call_model)
        workflow.add_node("tools", tool_node)

        workflow.add_edge(START, "agent")
        workflow.add_conditional_edges(
            "agent",
            should_continue,
        )
        workflow.add_edge("tools", "agent")

        checkpointer = MemorySaver()
        app = workflow.compile(checkpointer=checkpointer)
        return app

The graph defined here has a node for the initial OpenAI call, called “agent” above, and one for the tool handling step, called “tools.” LangGraph has a built-in object called ToolNode that takes a list of callable tools and triggers them based on a ChatMessage response, before returning to the “agent” node again.

    def should_continue(state: MessagesState):
        messages = state["messages"]
        last_message = messages[-1]
        # Route to the tool node only if the model asked for a tool.
        if last_message.tool_calls:
            return "tools"
        return END

    def call_model(state: MessagesState):
        messages = state["messages"]
        response = model.invoke(messages)
        return {"messages": [response]}

After each call of the “agent” node (put another way: the router in the code-based agent), the should_continue edge decides whether to return the response to the user or pass on to the ToolNode to handle tool calls.

Throughout each node, the “state” stores the list of messages and responses from OpenAI, similar to the code-based agent’s approach.
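The control flow that the graph encodes can be sketched in plain Python without LangGraph at all. Everything below is a simplified stand-in: the model and tool nodes are fakes, messages are plain dictionaries, and the state is merged by explicit list concatenation rather than by the framework.

```python
END = "__end__"


def call_model(state):
    """Stand-in for the LLM call: requests one tool call, then answers."""
    if not any(m["role"] == "tool" for m in state["messages"]):
        reply = {"role": "assistant", "content": "", "tool_calls": ["lookup"]}
    else:
        reply = {"role": "assistant", "content": "done", "tool_calls": []}
    return {"messages": state["messages"] + [reply]}


def run_tools(state):
    """Stand-in for the tool node: answers every requested tool call."""
    results = [
        {"role": "tool", "content": f"result of {name}"}
        for name in state["messages"][-1]["tool_calls"]
    ]
    return {"messages": state["messages"] + results}


def should_continue(state):
    """Conditional edge: route to tools if the last message asks for them."""
    return "tools" if state["messages"][-1]["tool_calls"] else END


def run_graph(state):
    nodes = {"agent": call_model, "tools": run_tools}
    current = "agent"
    while current != END:
        state = nodes[current](state)
        # "agent" has a conditional edge; "tools" always returns to "agent".
        current = should_continue(state) if current == "agent" else "agent"
    return state


final = run_graph({"messages": [{"role": "user", "content": "hi"}]})
print([m["role"] for m in final["messages"]])
# ['user', 'assistant', 'tool', 'assistant']
```

Seen this way, the graph is the same router loop as the code-based agent, with the branching decision pulled out of the node and into an edge function.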

Challenges with LangGraph

Most of the difficulties with LangGraph in the example stem from the need to use LangChain objects for things to flow nicely.

Challenge #1: Function Call Validation

In order to use the ToolNode object, I had to refactor most of my existing Skill code. The ToolNode takes a list of callable functions, which originally made me think I could use my existing functions; however, things broke down due to my function parameters.

The skills were defined as classes with a callable member function, meaning they had “self” as their first parameter. GPT-4o was smart enough not to include the “self” parameter in the generated function call; however, LangGraph read this as a validation error due to a missing parameter.

This took hours to figure out, because the error message instead marked the third parameter in the function (“args” on the data analysis skill) as the missing parameter:

    pydantic.v1.error_wrappers.ValidationError: 1 validation error for data_analysis_toolSchema
    args field required (type=value_error.missing)

It’s worth mentioning that the error message originated from Pydantic, not from LangGraph.
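A plain-Python analogue shows why a “self” parameter can surface as a different missing argument. This is not LangGraph’s or Pydantic’s actual validation path, and the class and parameter names are hypothetical; it only illustrates how a function taken from a class differs from a bound method.

```python
import inspect


class DataAnalysisSkill:
    """Hypothetical skill; only the method signature matters here."""

    def data_analysis_tool(self, data: str, args: str) -> str:
        return f"{data}|{args}"


unbound = DataAnalysisSkill.data_analysis_tool   # plain function, still expects `self`
bound = DataAnalysisSkill().data_analysis_tool   # bound method, `self` already supplied

print(list(inspect.signature(unbound).parameters))  # ['self', 'data', 'args']
print(list(inspect.signature(bound).parameters))    # ['data', 'args']

# Calling the unbound function with only the two "real" arguments shifts them
# left by one, so Python reports `args` as missing, not `self`.
try:
    unbound("rows", "mean")
    error_text = ""
except TypeError as exc:
    error_text = str(exc)
print(error_text)
```

Any tool schema derived from the unbound signature inherits the extra parameter, so the reported gap can land on the last argument instead of on “self”.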

I eventually bit the bullet and redefined my skills as basic methods with LangChain’s @tool decorator, and was able to get things working.

    from langchain_core.tools import tool

    @tool
    def generate_and_run_sql_query(query: str):
        """Generates and runs an SQL query based on the prompt.

        Args:
            query (str): A string containing the original user prompt.

        Returns:
            str: The result of the SQL query.
        """

Challenge #2: Debugging

As mentioned, debugging in a framework is difficult. This primarily comes down to confusing error messages and abstracted concepts that make it harder to view variables.

The abstracted concepts primarily show up when trying to debug the messages being sent around the agent. LangGraph stores these messages in state["messages"]. Some nodes within the graph pull from these messages automatically, which can make it difficult to understand the value of messages when they are accessed by the node.
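One low-tech way to regain visibility is to summarize the message list at the top of each node. The helper below is a hypothetical debugging aid, not part of LangGraph; it accepts either plain dictionaries or message objects that expose `type` and `content` attributes, as LangChain messages do.

```python
from types import SimpleNamespace


def summarize_messages(messages, width=60):
    """Return one line per message: index, role or type, truncated content."""
    lines = []
    for i, message in enumerate(messages):
        if isinstance(message, dict):
            role = message.get("role", "?")
            content = message.get("content", "")
        else:
            # Message objects are assumed to expose `.type` and `.content`.
            role = getattr(message, "type", type(message).__name__)
            content = getattr(message, "content", "")
        lines.append(f"[{i}] {role}: {str(content)[:width]}")
    return lines


sample = [
    {"role": "user", "content": "How many traces errored today?"},
    SimpleNamespace(type="ai", content="Calling the SQL tool."),
]
for line in summarize_messages(sample):
    print(line)
```

Calling something like this as the first line of call_model makes it clear what the node actually received on each pass through the loop.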

LangGraph Benefits

One of the main benefits of LangGraph is that it’s easy to work with. The graph structure code is clean and accessible. Especially if you have complex node logic, having a single view of the graph makes it easier to understand how the agent is connected together. LangGraph also makes it straightforward to convert an existing application built in LangChain.

Takeaway

If you use everything in the framework, LangGraph works cleanly; if you step outside of it, prepare for some debugging headaches.

LlamaIndex Workflows

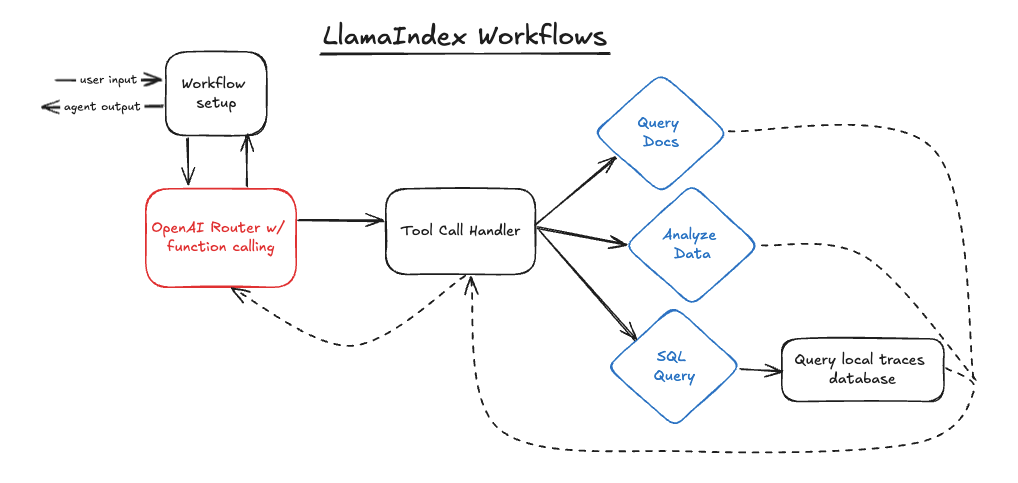

Workflows is a newer entrant into the agent framework space, premiering earlier this summer. Like LangGraph, it aims to make looping agents easier to build. Workflows also has a particular focus on running asynchronously.

Some elements of Workflows seem to be in direct response to LangGraph, specifically its use of events instead of edges and conditional edges. Workflows use steps (analogous to nodes in LangGraph) to house logic, and emitted and received events to move between steps.

The structure above looks similar to the LangGraph structure, save for one addition. I added a setup step to the Workflow to prepare the agent context, more on this below. Despite the similar structure, there is very different code powering it.

Workflows Architecture

The code below defines the Workflow structure. Similar to LangGraph, this is where I prepared the state and attached the skills to the LLM object.

    from llama_index.core.llms import ChatMessage
    from llama_index.core.memory import ChatMemoryBuffer
    from llama_index.core.tools import FunctionTool, ToolMetadata
    from llama_index.core.workflow import Event, StartEvent, StopEvent, Workflow, step


    class AgentFlow(Workflow):
        def __init__(self, llm, timeout=300):
            super().__init__(timeout=timeout)
            self.llm = llm
            self.memory = ChatMemoryBuffer(token_limit=1000).from_defaults(llm=llm)
            # Wrap every skill from the SkillMap as a LlamaIndex tool.
            self.tools = []
            for func in skill_map.get_function_list():
                self.tools.append(
                    FunctionTool(
                        skill_map.get_function_callable_by_name(func),
                        metadata=ToolMetadata(
                            name=func, description=skill_map.get_function_description_by_name(func)
                        ),
                    )
                )

        @step
        async def prepare_agent(self, ev: StartEvent) -> RouterInputEvent:
            # Runs once per user turn: record the user message, then hand off to the router.
            user_input = ev.input
            user_msg = ChatMessage(role="user", content=user_input)
            self.memory.put(user_msg)

            chat_history = self.memory.get()
            return RouterInputEvent(input=chat_history)

This is also where I define an extra step, “prepare_agent”. This step creates a ChatMessage from the user input and adds it to the workflow memory. Splitting this out as a separate step means that we do not return to it as the agent loops through steps, which avoids repeatedly adding the user message to the memory.

In the LangGraph case, I accomplished the same thing with a run_agent method that lived outside the graph. This change is mostly stylistic; however, it’s cleaner in my opinion to house this logic with the Workflow and graph as we’ve done here.

With the Workflow set up, I then defined the routing code:

    # Method of AgentFlow
    @step
    async def router(self, ev: RouterInputEvent) -> ToolCallEvent | StopEvent:
        messages = ev.input

        if not any(
            isinstance(message, dict) and message.get("role") == "system" for message in messages
        ):
            system_prompt = ChatMessage(role="system", content=SYSTEM_PROMPT)
            messages.insert(0, system_prompt)

        with using_prompt_template(template=SYSTEM_PROMPT, version="v0.1"):
            response = await self.llm.achat_with_tools(
                model="gpt-4o",
                messages=messages,
                tools=self.tools,
            )

        self.memory.put(response.message)

        tool_calls = self.llm.get_tool_calls_from_response(response, error_on_no_tool_call=False)
        if tool_calls:
            return ToolCallEvent(tool_calls=tool_calls)
        else:
            return StopEvent(result=response.message.content)

And the tool call handling code:

    # Method of AgentFlow
    @step
    async def tool_call_handler(self, ev: ToolCallEvent) -> RouterInputEvent:
        tool_calls = ev.tool_calls

        for tool_call in tool_calls:
            function_name = tool_call.tool_name
            arguments = tool_call.tool_kwargs
            if "input" in arguments:
                arguments["prompt"] = arguments.pop("input")

            try:
                function_callable = skill_map.get_function_callable_by_name(function_name)
            except KeyError:
                function_result = "Error: Unknown function call"
            else:
                # Only call the skill if the lookup succeeded, so the error
                # message above is not overwritten.
                function_result = function_callable(arguments)

            message = ChatMessage(
                role="tool",
                content=function_result,
                additional_kwargs={"tool_call_id": tool_call.tool_id},
            )

            self.memory.put(message)

        return RouterInputEvent(input=self.memory.get())

Both of these look more similar to the code-based agent than the LangGraph agent. This is mainly because Workflows keeps the conditional routing logic in the steps as opposed to in conditional edges (the check on tool_calls at the end of the router step was a conditional edge in LangGraph, whereas now it is just part of the routing step), and because LangGraph has a ToolNode object that does almost everything in the tool_call_handler method automatically.

Moving past the routing step, one thing I was very happy to see is that I could use my SkillMap and existing skills from my code-based agent with Workflows. These required no changes to work with Workflows, which made my life much easier.

Challenges with Workflows

Challenge #1: Sync vs Async

While asynchronous execution is preferable for a live agent, debugging a synchronous agent is much easier. Workflows is designed to work asynchronously, and trying to force synchronous execution was very difficult.

I initially thought I would just be able to remove the “async” method designations and switch from “achat_with_tools” to “chat_with_tools”. However, since the underlying methods within the Workflow class were also marked as asynchronous, it was necessary to redefine those in order to run synchronously. I ended up sticking to an asynchronous approach, but this didn’t make debugging more difficult.
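When the agent stays asynchronous, a thin blocking wrapper is often enough to drive it from a plain script or a debugger session. The sketch below uses only the standard library; run_agent is a hypothetical stand-in for an async entry point, not the Workflows API.

```python
import asyncio


async def run_agent(prompt: str) -> str:
    """Stand-in for an async agent entry point (hypothetical)."""
    await asyncio.sleep(0)  # placeholder for awaiting the LLM and tools
    return f"answer to: {prompt}"


def run_agent_sync(prompt: str) -> str:
    """Blocking wrapper so the agent can be called from synchronous code."""
    return asyncio.run(run_agent(prompt))


print(run_agent_sync("how many traces today?"))  # answer to: how many traces today?
```

Note that asyncio.run raises an error if an event loop is already running in the current thread, which is the case in some notebook environments, so this wrapper suits scripts better than notebooks.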

Challenge #2: Pydantic Validation Errors

In a repeat of the woes with LangGraph, similar problems emerged around confusing Pydantic validation errors on skills. Fortunately, these were easier to address this time since Workflows was able to handle member functions just fine. I ultimately just ended up having to be more prescriptive in creating LlamaIndex FunctionTool objects for my skills:

    for func in skill_map.get_function_list():
        self.tools.append(FunctionTool(
            skill_map.get_function_callable_by_name(func),
            metadata=ToolMetadata(name=func, description=skill_map.get_function_description_by_name(func))))

Excerpt from AgentFlow.__init__ that builds FunctionTools

Benefits of Workflows

I had a much easier time building the Workflows agent than I did the LangGraph agent, mainly because Workflows still required me to write routing logic and tool handling code myself instead of providing built-in functions. This also meant that my Workflow agent looked extremely similar to my code-based agent.

The biggest difference came in the use of events. I used two custom events to move between steps in my agent:

    class ToolCallEvent(Event):
        tool_calls: list[ToolSelection]

    class RouterInputEvent(Event):
        input: list[ChatMessage]

The emitter-receiver, event-based architecture took the place of directly calling some of the methods in my agent, like the tool call handler.

If you have more complex systems with multiple steps that are triggering asynchronously and might emit multiple events, this architecture becomes very helpful to manage that cleanly.

Other benefits of Workflows include the fact that it is very lightweight and doesn’t force much structure on you (aside from the use of certain LlamaIndex objects) and that its event-based architecture provides a helpful alternative to direct function calling, especially for complex, asynchronous applications.
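The event-based pattern itself can be sketched with nothing but asyncio and dataclasses. The event and step names below are deliberately different from the LlamaIndex ones to make clear that this is an illustration of the idea, not the framework’s implementation: each step returns an event, and a small loop dispatches on the event’s type.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class RouterInput:
    messages: list


@dataclass
class ToolCall:
    name: str


@dataclass
class Stop:
    result: str


async def router(ev: RouterInput):
    # Ask for a tool once, then stop when a tool result is in the history.
    if any(m.startswith("tool:") for m in ev.messages):
        return Stop(result=ev.messages[-1])
    return ToolCall(name="lookup")


async def tool_handler(ev: ToolCall, history: list):
    history.append(f"tool:{ev.name} ok")
    return RouterInput(messages=history)


async def run(prompt: str) -> str:
    history = [f"user:{prompt}"]
    event = RouterInput(messages=history)
    # Dispatch on the type of the emitted event until a Stop event appears.
    while not isinstance(event, Stop):
        if isinstance(event, RouterInput):
            event = await router(event)
        elif isinstance(event, ToolCall):
            event = await tool_handler(event, history)
    return event.result


print(asyncio.run(run("hi")))  # tool:lookup ok
```

Because steps only know about the events they receive and emit, adding a new step means adding a new event type and a handler, without editing the steps that already exist.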

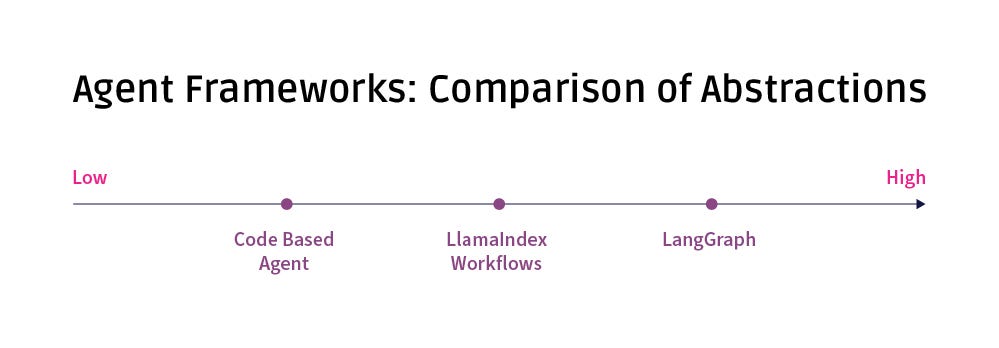

Evaluating Frameworks

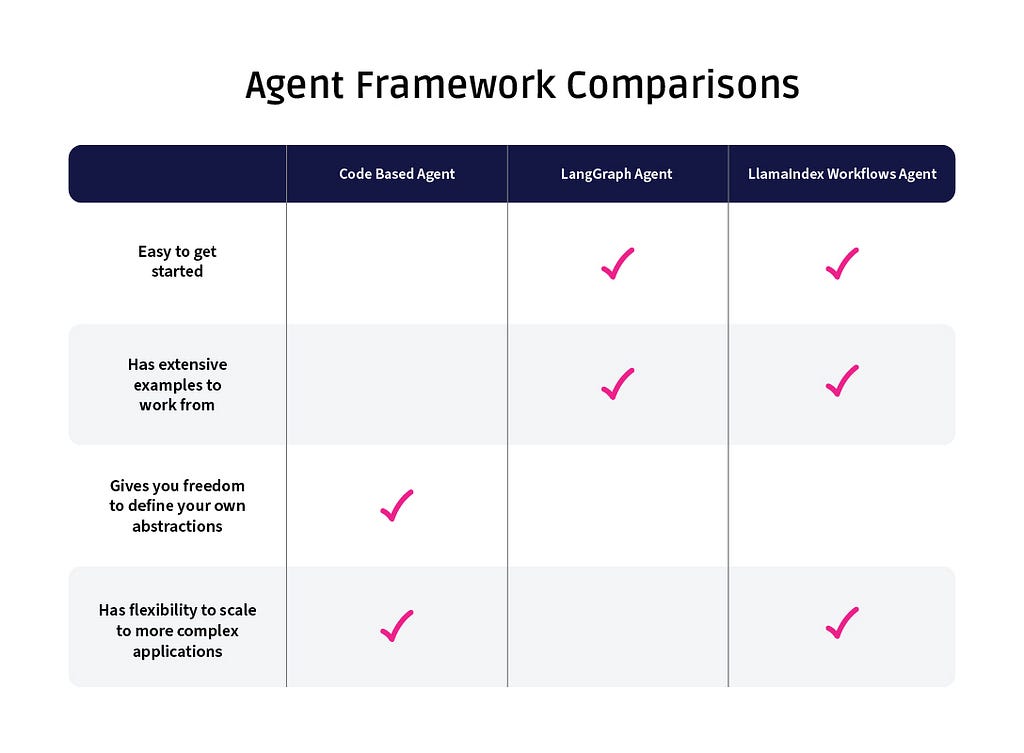

Looking across the three approaches, each one has its benefits.

The no-framework approach is the simplest to implement. Because any abstractions are defined by the developer (i.e. the SkillMap object in the above example), keeping various types and objects straight is easy. The readability and accessibility of the code comes down entirely to the individual developer, however, and it’s easy to see how increasingly complex agents could get messy without some enforced structure.

LangGraph provides quite a bit of structure, which makes the agent very clearly defined. If a broader team is collaborating on an agent, this structure would provide a helpful way of enforcing an architecture. LangGraph might also provide a good starting point with agents for those not as familiar with the structure. There is a tradeoff, however: since LangGraph does quite a bit for you, it can lead to headaches if you don’t fully buy into the framework; the code may be very clean, but you may pay for it with more debugging.

Workflows falls somewhere in the middle. The event-based architecture might be extremely helpful for some projects, and the fact that less is required in terms of using LlamaIndex types provides greater flexibility for those not fully using the framework across their application.

Ultimately, the core question may come down to “are you already using LlamaIndex or LangChain to orchestrate your application?” LangGraph and Workflows are both so entwined with their respective underlying frameworks that the additional benefits of each agent-specific framework might not cause you to switch on merit alone.

The pure code approach will likely always be an attractive option. If you have the rigor to document and enforce any abstractions created, then ensuring nothing in an external framework slows you down is easy.

Key Questions To Help In Choosing An Agent Framework

Of course, “it depends” is never a satisfying answer. These three questions should help you decide which framework to use in your next agent project.

Are you already using LlamaIndex or LangChain for significant pieces of your project?

If yes, explore that option first.

Are you familiar with common agent structures, or do you want something telling you how you should structure your agent?

If you fall into the latter group, try Workflows. If you really fall into the latter group, try LangGraph.

Has your agent been built before?

One of the framework benefits is that there are many tutorials and examples built with each. There are far fewer examples of pure code agents to build from.

Conclusion

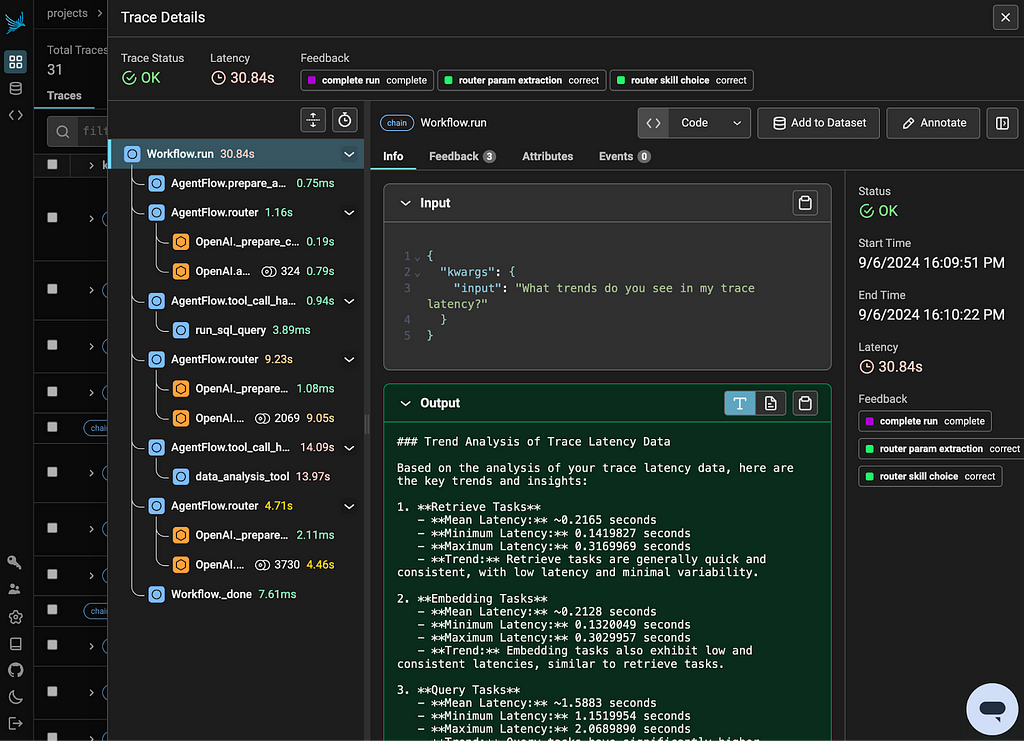

Choosing an agent framework is just one choice among many that will impact outcomes in production for generative AI systems. As always, it pays to have robust guardrails and LLM tracing in place, and to be agile as new agent frameworks, research, and models upend established techniques.

Choosing Between LLM Agent Frameworks was originally published in Towards Data Science on Medium.
